Model Evaluation & Validation: The Data Scientist’s Compass

As a data scientist, you’re essentially a builder, architecting solutions using data. You construct models – algorithms that learn patterns from data to make predictions or decisions. But imagine building a house without inspecting the foundation or testing the structural integrity. That’s where model evaluation and validation come in. They are the critical steps that ensure your model is not just a fancy structure, but a strong, reliable, and valuable asset. They are the compass guiding you through the murky waters of data science, ensuring you stay on course and deliver real-world impact.

Why Model Evaluation & Validation Matters

Model evaluation and validation are the cornerstones of responsible data science. They’re the practices that separate a theoretically sound model from a practically useful one. Failing to evaluate and validate your model is like setting sail without a map or a compass – you might get somewhere, but the chances of getting lost or running aground are significantly higher.

Building Trust in Your Models

Trust is paramount. Stakeholders need to believe in the accuracy and reliability of your models. Rigorous evaluation and validation give you the evidence you need to back up your claims, building trust and fostering confidence in the model’s ability to deliver. It’s about demonstrating that your model isn’t just spitting out random guesses; it’s a well-understood, carefully constructed tool. Think of it as providing receipts – the more you show the work, the more confidence people have in what you’re doing.

Avoiding Costly Mistakes

In the real world, models drive decisions that can have significant consequences. Whether it’s approving a loan, diagnosing a disease, or predicting customer churn, a poorly performing model can lead to errors. These errors can be costly, resulting in financial losses, reputational damage, or even life-threatening situations. Robust evaluation and validation help you catch these issues early on, before they cause real-world harm. It’s like conducting a thorough inspection before a building is occupied – you want to identify any potential problems before they become a disaster.

Driving Business Value

Ultimately, the goal is to create models that provide value. This could mean increasing revenue, reducing costs, improving efficiency, or enhancing customer satisfaction. Model evaluation and validation are essential to ensure that your models are actually delivering on these goals. They help you identify areas for improvement, refine your model, and ultimately maximize its impact on the business. Without them, you’re driving blind, hoping to reach your destination without knowing the best route.

Essential Tasks in Model Evaluation & Validation

The process of model evaluation and validation involves several key tasks, each playing a crucial role in ensuring your model’s reliability and effectiveness. Think of these tasks as the different checkpoints on your data science journey, helping you assess progress and adjust your course as needed.

Defining Evaluation Metrics

Choosing the right metrics is akin to selecting the right tools for a job. The metrics you use determine how you measure your model’s performance and identify its strengths and weaknesses. Without them, you’re essentially working in the dark, unable to gauge your model’s success or areas for improvement.

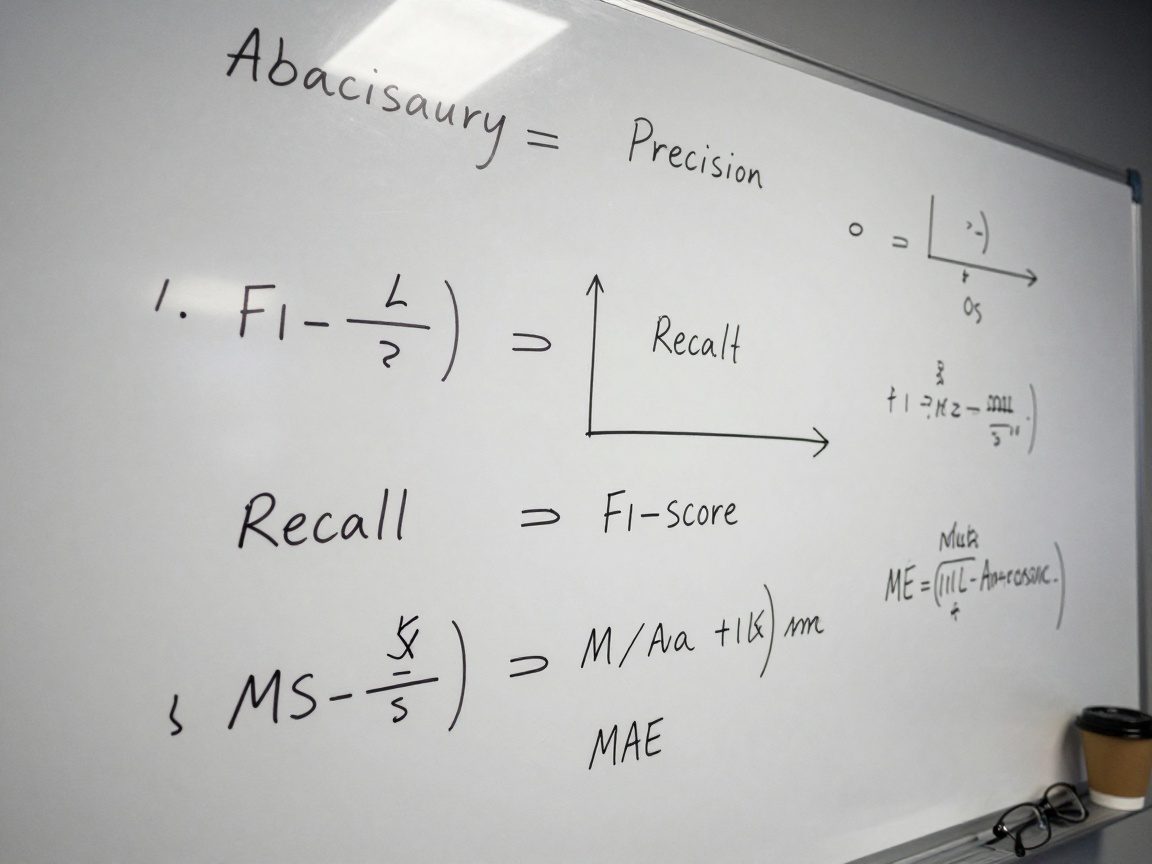

Understanding the Landscape of Metrics

The world of evaluation metrics is diverse, with different metrics designed for different types of problems. For classification tasks (predicting categories), you’ll encounter metrics like accuracy, precision, recall, F1-score, and the area under the ROC curve (AUC-ROC). For regression tasks (predicting continuous values), you might use mean squared error (MSE), mean absolute error (MAE), or R-squared. Understanding these metrics, what they measure, and how they’re calculated is fundamental.
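To make the calculations behind these metrics concrete, here is a minimal from-scratch sketch in plain Python. The function names and the toy labels are illustrative assumptions, not part of any library; in practice you would likely reach for the equivalents in scikit-learn's `sklearn.metrics` module.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn
    # Convention assumed here: report 0.0 when a ratio is undefined.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def regression_metrics(y_true, y_pred):
    """MSE, MAE, and R-squared for continuous targets."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "mse": ss_res / n,
        "mae": sum(abs(e) for e in errors) / n,
        # R-squared is undefined when the target is constant.
        "r2": 1 - ss_res / ss_tot if ss_tot else float("nan"),
    }


# Toy data, invented for illustration only.
clf = classification_metrics([1, 0, 1, 1, 0, 0, 1, 0],
                             [1, 0, 0, 1, 0, 1, 1, 0])
reg = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.5, 5.0, 8.0, 8.5])
print(clf)
print(reg)
```

On this toy data the classifier makes one false positive and one false negative out of eight predictions, so accuracy, precision, recall, and F1 all come out at 0.75.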

Choosing the Right Metrics for Your Problem

The selection of your metric(s) should align with the goals of your project and the specific characteristics of your data. For instance, in a medical diagnosis setting, you might prioritize recall (the ability to identify all positive cases) over precision (the accuracy of positive predictions) to avoid missing any potential illnesses. On the other hand, in a fraud detection scenario, precision might be more important to minimize false positives, which could inconvenience customers.
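One way to see the precision/recall trade-off is to move the decision threshold of a model that outputs scores. The sketch below uses invented scores and a hypothetical helper, `precision_recall_at`, purely to illustrate the effect.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when scores >= threshold are predicted positive."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, preds) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 0)
    # Convention assumed here: report 0.0 when a ratio is undefined.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Invented labels and model scores, for illustration only.
y_true = [0, 0, 1, 0, 1, 1, 0, 1]
scores = [0.1, 0.3, 0.35, 0.4, 0.6, 0.7, 0.8, 0.9]

results = {t: precision_recall_at(y_true, scores, t) for t in (0.3, 0.5, 0.85)}
for threshold, (precision, recall) in results.items():
    print(f"threshold={threshold:.2f} precision={precision:.2f} recall={recall:.2f}")
```

With these numbers, the low threshold catches every positive case at the cost of more false alarms, while the high threshold raises no false alarms but misses most positives. Which end of that range is acceptable depends on the costs in your own problem.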

Developing Validation Datasets

Before you can evaluate a model, you need data to test it on. The process of splitting your data into different sets allows you to train and evaluate your model without letting it “peek” at the answers during training.

Splitting Your Data: Train, Validation, Test

A common approach is to divide your data into three sets: the training set, the validation set, and the test set. The training set is used to train the model; the validation set is used to tune the model’s hyperparameters and assess its performance during training; and the test set is used for the final evaluation of the model after it’s been fully trained and validated. Think of this as the process of studying, practicing, and then taking the real exam.
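A three-way split can be sketched in a few lines of plain Python. The 60/20/20 proportions, the fixed seed, and the function name are assumptions chosen for illustration; scikit-learn's `train_test_split`, applied twice, is a common alternative.

```python
import random


def train_val_test_split(rows, val_frac=0.2, test_frac=0.2, seed=0):
    """Shuffle once, then cut the data into three disjoint parts."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)  # fixed seed keeps the split reproducible
    n_test = round(len(rows) * test_frac)
    n_val = round(len(rows) * val_frac)
    test = rows[:n_test]
    val = rows[n_test:n_test + n_val]
    train = rows[n_test + n_val:]
    return train, val, test


# Stand-in dataset: 100 row identifiers.
train, val, test = train_val_test_split(range(100))
print(len(train), len(val), len(test))
```

A plain random shuffle like this assumes the rows are independent. Time-ordered or grouped data usually needs a split that respects that structure.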

Addressing Data Imbalance

In many real-world datasets, some classes or outcomes are more prevalent than others. For example, in fraud detection, fraudulent transactions are usually far less frequent than legitimate ones. If you don’t address this imbalance, your model might perform poorly on the minority class (in this case, fraudulent transactions). Techniques like oversampling, undersampling, or using different cost functions can help mitigate this problem.
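As a rough illustration of oversampling, the sketch below duplicates minority-class rows at random until every class matches the majority count. The function name and the 95/5 toy split are assumptions made for this example. Note that a model labelling everything as legitimate would already score 95% accuracy on this data, which is why accuracy alone is misleading here.

```python
import random
from collections import Counter


def random_oversample(rows, labels, seed=0):
    """Duplicate minority-class rows until all classes are equally frequent."""
    rng = random.Random(seed)
    by_class = {}
    for row, label in zip(rows, labels):
        by_class.setdefault(label, []).append(row)
    target = max(len(group) for group in by_class.values())
    out_rows, out_labels = [], []
    for label, group in by_class.items():
        # Sample with replacement to fill the gap to the majority count.
        extra = [rng.choice(group) for _ in range(target - len(group))]
        for row in group + extra:
            out_rows.append(row)
            out_labels.append(label)
    return out_rows, out_labels


# Toy training set: 95 legitimate rows, 5 fraudulent rows.
rows = list(range(100))
labels = ["legit"] * 95 + ["fraud"] * 5
balanced_rows, balanced_labels = random_oversample(rows, labels)
print(Counter(balanced_labels))
```

Resampling of this kind should be applied to the training set only. Oversampling before splitting would place copies of the same row in both training and evaluation data.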

Implementing Evaluation Techniques

Once you have your evaluation metrics and validation datasets, it’s time to put them into action. This involves applying various techniques to assess your model’s performance and understand its limitations.

Cross-Validation: The Gold Standard

Cross-validation is a robust technique for estimating how well your model will generalize to unseen data. It involves splitting the data into multiple “folds,” training the model on some folds and evaluating it on the remaining folds. This process is repeated multiple times, with each fold serving as the validation set once. The results are then averaged to get a more reliable estimate of the model’s performance.
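The fold rotation described above can be sketched as follows. The helper names, the deliberately trivial "predict the training mean" model, and the toy data are all assumptions for illustration; scikit-learn offers `KFold` and `cross_val_score` for real use.

```python
import random
from statistics import mean


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for fold in folds[:i] + folds[i + 1:] for j in fold]
        yield train_idx, val_idx


def cross_validate(xs, ys, fit, score, k=5):
    """Train on k-1 folds, score on the held-out fold, repeat k times."""
    scores = []
    for train_idx, val_idx in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        scores.append(score(model,
                            [xs[i] for i in val_idx],
                            [ys[i] for i in val_idx]))
    return scores


# Hypothetical baseline "model": always predict the mean training target.
def fit_mean(xs, ys):
    return mean(ys)


def mae(model, xs, ys):
    return mean(abs(y - model) for y in ys)


xs = list(range(20))
ys = [2 * x + 1 for x in xs]
folds = list(k_fold_indices(len(xs), k=5))
scores = cross_validate(xs, ys, fit_mean, mae, k=5)
print("per-fold MAE:", scores)
print("mean MAE:", mean(scores))
```

Reporting the spread of the per-fold scores alongside their mean gives a sense of how stable the estimate is.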

Hold-Out Validation: A Simple Approach

Hold-out validation is the simplest technique, where you split your data into training and validation sets once. You train the model on the training set and evaluate it on the validation set. While simpler, it might be less reliable than cross-validation, especially if the data is limited.

Analyzing and Interpreting Results

The numbers you get from your evaluation metrics are just the starting point. You need to analyze them carefully to understand what they mean and what they tell you about your model’s performance.

Identifying Strengths and Weaknesses

What are the specific areas where your model excels? Where does it struggle? Examining the results across different metrics and across different subsets of your data can help you identify these strengths and weaknesses. For example, if you’re working on image recognition, you might discover that your model performs well on some types of images but poorly on others.
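Breaking a metric down by subset can be as simple as grouping predictions before scoring them. In this sketch the group names ("daylight", "night") and the labels are invented to mirror the image-recognition example.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Accuracy computed separately for each subset of the data."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


# Invented predictions for two kinds of images.
y_true = ["cat", "dog", "cat", "dog", "cat", "dog", "cat", "dog"]
y_pred = ["cat", "dog", "cat", "dog", "dog", "dog", "dog", "cat"]
groups = ["daylight"] * 4 + ["night"] * 4

per_group = accuracy_by_group(y_true, y_pred, groups)
overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print("overall:", overall)
print("by group:", per_group)
```

Here the overall accuracy of 0.625 hides the fact that the model is perfect on daylight images and right only a quarter of the time on night images.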

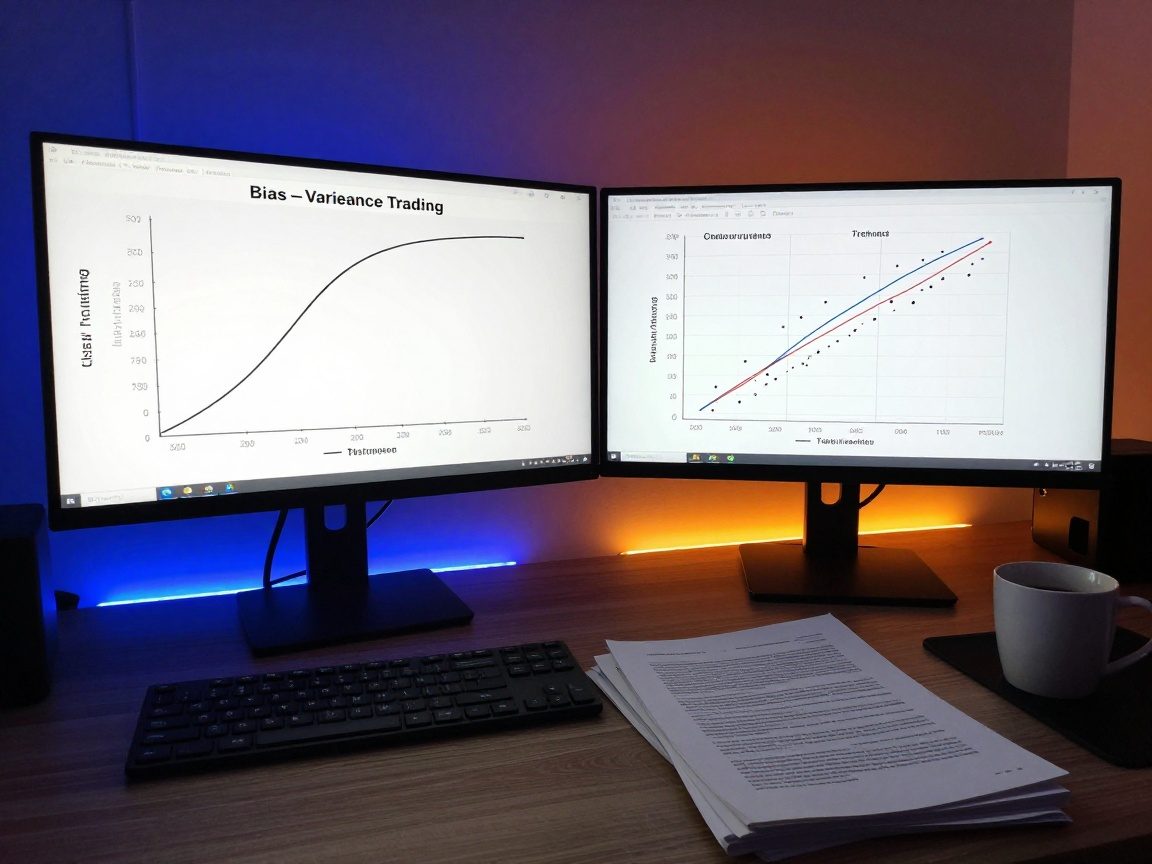

Understanding Bias and Variance

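Bias is the error that comes from overly simple assumptions in the model, which cause it to miss real patterns and err consistently in the same direction. Variance is the model’s sensitivity to the particular training data it saw, so that small changes in that data produce very different predictions. Reducing one often increases the other, a tension known as the bias-variance tradeoff, so the aim is to strike a balance between them. Analyzing bias and variance helps you understand whether your model is underfitting (high bias, too simple), overfitting (high variance, too complex), or somewhere in between.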
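For squared-error loss, the balance between bias and variance can be written down exactly. The notation here is an assumption introduced for this note: the data follow y = f(x) + ε with zero-mean noise of variance σ², and f̂ is the model fitted on a randomly drawn training set, with expectations taken over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible noise}}
```

The first term shrinks as the model becomes more flexible, the second tends to grow, and the third cannot be reduced by any choice of model.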

Generating Recommendations

Model evaluation and validation are not just about assessing performance; they’re also about taking action. The insights you gain from your evaluation should inform recommendations for how to improve your model.

Iterative Improvement: The Model’s Journey

Data science is an iterative process. The goal is to continuously improve your model, refining its performance and addressing any weaknesses you’ve identified. This could involve trying different algorithms, adjusting hyperparameters, collecting more data, or feature engineering (creating new features from existing ones).

Actionable Insights: What to Do Next

Your recommendations should be specific and actionable. They should outline the steps needed to address any issues you’ve identified and improve the model’s performance. For example, if your model is overfitting, you might recommend regularization techniques or reducing the model’s complexity.

Communicating Findings

The final step is to communicate your findings to stakeholders. Clear, concise, and well-presented results are essential to build trust and to garner support for your model.

Tailoring Your Message to the Audience

The way you communicate your findings should be tailored to your audience. Technical audiences might appreciate detailed statistical reports, while business stakeholders might prefer a summary of key results and actionable insights. Always prioritize clarity and relevance.

Visualizing Results for Impact

Visualizations can be a powerful tool for communicating your findings. Charts, graphs, and other visual aids can help you quickly convey complex information and highlight key trends. For example, a confusion matrix can visually represent the performance of a classification model, while a ROC curve can illustrate its ability to discriminate between classes.
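The numbers behind both visuals are straightforward to compute. The sketch below builds a confusion matrix (rows are actual classes, columns are predicted classes) and computes the area under the ROC curve as the fraction of positive/negative pairs that the model ranks correctly. The data and function names are illustrative assumptions; plotting is left to a library such as Matplotlib.

```python
def confusion_matrix(y_true, y_pred, labels):
    """matrix[i][j] counts samples of actual class i predicted as class j."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for truth, pred in zip(y_true, y_pred):
        matrix[index[truth]][index[pred]] += 1
    return matrix


def roc_auc(y_true, scores):
    """AUC as the probability that a positive outscores a negative."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    # Ties count as half a win.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Invented labels and scores, for illustration only.
y_true = [0, 0, 1, 0, 1, 1, 0, 1]
scores = [0.1, 0.3, 0.35, 0.4, 0.6, 0.7, 0.8, 0.9]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

matrix = confusion_matrix(y_true, y_pred, labels=[0, 1])
auc = roc_auc(y_true, scores)
for label, row in zip([0, 1], matrix):
    print(f"actual {label}: {row}")
print("AUC:", auc)
```

The pairwise AUC calculation is quadratic in the number of samples, which is fine for a small example but too slow for large datasets.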

Tools and Technologies for Model Evaluation & Validation

Fortunately, a wealth of tools and technologies are available to facilitate the process of model evaluation and validation. Here are some of the most popular.

Popular Python Libraries

Python is the dominant language for data science, and several powerful libraries can help you with model evaluation and validation:

- Scikit-learn: This library provides a comprehensive suite of tools for machine learning, including various evaluation metrics, cross-validation techniques, and model selection tools.

- Pandas: A versatile data manipulation and analysis library. It’s essential for pre-processing, cleaning, and preparing data for model evaluation.

- Matplotlib and Seaborn: Libraries for creating various charts and graphs to visualize your results.

- TensorFlow and Keras: Deep learning libraries that come with built-in evaluation metrics and functionalities.

Cloud-Based Platforms

Cloud platforms offer powerful tools and resources for data science:

- Amazon SageMaker: Provides a complete end-to-end environment for building, training, and deploying machine learning models.

- Google Cloud Vertex AI (the successor to AI Platform): Offers a suite of services for machine learning, including model training, evaluation, and deployment.

- Microsoft Azure Machine Learning: A cloud-based platform for building and deploying machine learning models.

Common Pitfalls and How to Avoid Them

Even with the best intentions, it’s easy to make mistakes during model evaluation and validation. Here are some common pitfalls and how to avoid them.

Overfitting and Underfitting

Overfitting occurs when a model learns the training data too well, including its noise and irrelevant patterns. This results in poor performance on unseen data. Underfitting, on the other hand, occurs when a model is too simple to capture the underlying patterns in the data. To avoid overfitting, use regularization, cross-validation, and careful hyperparameter tuning; to address underfitting, try a more expressive model or more informative features.

Data Leakage

Data leakage occurs when information from the validation or test sets “leaks” into the training process. This can lead to overly optimistic performance estimates. To avoid data leakage, make sure that every preparation step uses only information that would be available at training time: fit scalers, encoders, imputers, and engineered features on the training set alone, then apply them unchanged to the validation and test sets. Take particular care with feature engineering, data cleaning, and external data sources.
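A common source of leakage is a preprocessing step fitted on all of the data. The sketch below standardizes a feature using statistics learned from the training set only and contrasts that with the leaky alternative. The helper names and the numbers are assumptions for illustration.

```python
from statistics import mean, pstdev


def fit_scaler(values):
    """Learn the mean and standard deviation used for standardization."""
    return mean(values), pstdev(values)


def transform(values, scaler):
    """Apply previously learned statistics; assumes a non-zero std dev."""
    mu, sigma = scaler
    return [(v - mu) / sigma for v in values]


# Invented feature values.
train = [10.0, 12.0, 14.0, 16.0]
test = [30.0, 50.0]

# Correct: statistics come from the training set only.
scaler = fit_scaler(train)
train_scaled = transform(train, scaler)
test_scaled = transform(test, scaler)

# Anti-pattern: fitting on train + test lets test information shape training.
leaky_scaler = fit_scaler(train + test)

print("train-only mean:", scaler[0])
print("leaky mean:", leaky_scaler[0])
```

The two scalers disagree because the leaky one has already seen the test values, which is exactly the information the model should not have during training.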

Ignoring Business Context

It’s crucial to consider the business context when evaluating your model. Focusing solely on technical metrics without considering the real-world implications of your model’s predictions can lead to undesirable outcomes. Understand the business problem, define your success metrics, and make sure your model is aligned with business goals.

The Future of Model Evaluation & Validation

Model evaluation and validation will continue to evolve in response to new technologies and challenges:

- Explainable AI (XAI): As AI models become more complex, there is a growing need for techniques that can explain their decisions. XAI helps you understand the “why” behind your model’s predictions, improving interpretability and building trust.

- Automated Machine Learning (AutoML): AutoML platforms automate many aspects of the machine learning process, including model selection, hyperparameter tuning, and evaluation.

- Model Monitoring: Once deployed, it’s essential to monitor your models’ performance over time. This involves tracking key metrics, detecting data drift, and retraining models as needed.
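As one possible way to detect data drift during monitoring, the sketch below computes a Population Stability Index (PSI) between a reference sample of a feature and a more recent one. The bin count, the small `eps` used for empty bins, and the data are assumptions for illustration, and PSI is only one of several drift measures you could use.

```python
import math


def population_stability_index(reference, current, n_bins=4, eps=1e-6):
    """PSI between two samples, using equal-width bins from the reference.

    Assumes the reference sample is not constant (non-zero bin width).
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            # Values outside the reference range go into the edge bins.
            i = min(max(int((v - lo) / width), 0), n_bins - 1)
            counts[i] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    ref = bin_fractions(reference)
    cur = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Invented feature values: training-time sample vs. a shifted live sample.
reference = [i / 10 for i in range(100)]
shifted = [v + 3 for v in reference]

psi_stable = population_stability_index(reference, reference)
psi_drifted = population_stability_index(reference, shifted)
print("no drift:", psi_stable)
print("shifted:", psi_drifted)
```

A commonly quoted rule of thumb treats PSI values above roughly 0.25 as a significant shift, but that cutoff is a convention rather than a guarantee, and empty bins inflate the score in proportion to how small `eps` is.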

Model evaluation and validation will remain crucial as data scientists tackle more complex problems and rely on models to drive business value. Mastering these techniques is essential to the success of your projects and your career as a data scientist.

Conclusion

Model evaluation and validation are not mere formalities; they are the bedrock upon which successful data science projects are built. They empower you to build trust, avoid costly mistakes, and drive real business value. By understanding the essential tasks, utilizing the appropriate tools, and avoiding common pitfalls, you can harness the power of data to create models that make a positive impact on the world. Remember, the journey of a thousand models begins with a single validation step. So, embrace the rigor, be meticulous in your analysis, and the results will speak for themselves.

FAQs

What is the difference between model evaluation and model validation?

Model evaluation is the general process of assessing a model’s performance using metrics and techniques, such as cross-validation, on a dataset. Model validation is the process of verifying that the model meets the business requirements and is generalizable to new, unseen data, often involving a separate, held-out dataset or real-world testing.

What are some of the most common evaluation metrics for classification models?

Common metrics include accuracy, precision, recall, F1-score, and the area under the ROC curve (AUC-ROC). The best metric to use depends on the business problem, including any cost/benefit analysis.

How does cross-validation help in model evaluation?

Cross-validation is a robust technique that helps to estimate how well a model will generalize to unseen data by training and evaluating the model on multiple folds of the data. This approach provides a more reliable estimate of the model’s performance than a single train/validation split.

What is the role of a validation dataset?

The validation dataset is used to tune the model’s hyperparameters and assess its performance during the training phase. It helps to prevent overfitting by monitoring the model’s performance on unseen data while training.

How can I avoid data leakage?

To avoid data leakage, ensure that you are only using information available at training time during data preparation and feature engineering. This means separating the data into training, validation, and test sets before any preprocessing, and fitting data transformations on the training set only.